What if technology could bridge the gap between spoken language and sign language, empowering millions of people to communicate more seamlessly? With advancements in deep learning, this vision is no longer a distant dream. Imagine a real-time system that detects hand gestures with precision and translates them into text labels for broader accessibility. Enter the Sign Language Detection Transformer, an innovative approach using the power of DETR (DEtection TRansformer). Whether you’re a developer eager to explore new frontiers or an advocate for inclusivity, this project offers an opportunity to combine innovation with impact.

In this guide, Nicholas Renotte takes you through the step-by-step process of building your own sign language detection system, from preparing diverse datasets to deploying a real-time model. Along the way, you’ll learn how DETR’s pre-trained weights and Hungarian matching algorithm simplify complex tasks, making this project achievable even on modest hardware. But this isn’t just about technology; it’s about creating tools that foster connection and understanding. By the end, you’ll not only have a functional detection system but also a deeper appreciation for how AI can make the world more accessible. So, how can you turn this vision into reality? Let’s explore.

Real-Time Sign Language Detection

TL;DR Key Takeaways:

- DETR (DEtection TRansformer) is an object detection model that combines a convolutional neural network backbone with transformer layers, which makes it well suited to real-time sign language detection.

- Pre-trained weights reduce training time and computational demands, while the Hungarian matching algorithm pairs each prediction with a ground truth annotation during training, which improves accuracy.

- Data preparation, including collecting diverse images, annotating gestures, and formatting data, is crucial for building a robust detection system.

- Training involves using PyTorch, pre-trained weights, hyperparameter tuning, and data augmentation to optimize the model for high accuracy and generalization.

- Real-time detection systems can be deployed on standard laptops, with options to expand gesture classes and adapt to different sign languages, promoting inclusivity and accessibility.

Understanding DETR

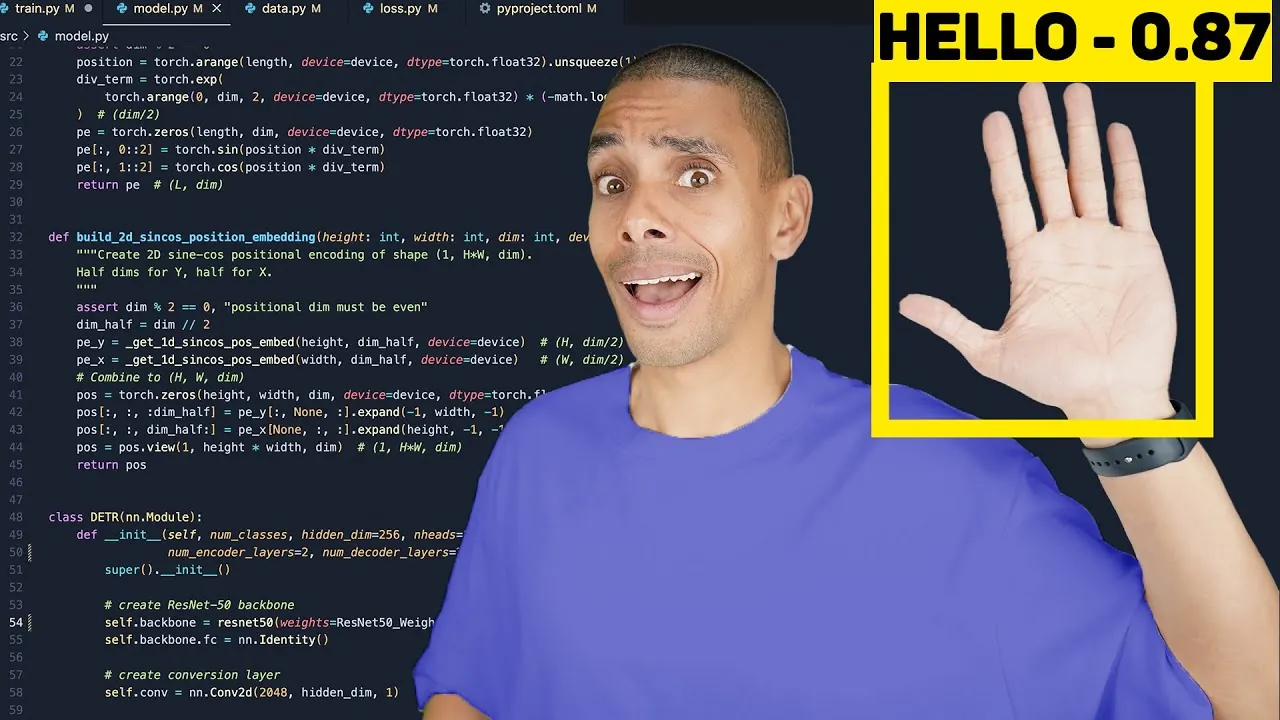

DETR is an innovative object detection model that integrates convolutional neural networks with transformer layers. Its backbone, ResNet-50, extracts essential features from input images, while the transformer layers classify objects and predict bounding boxes. This combination makes DETR particularly effective for detecting hand gestures in sign language.

Key features of DETR include:

- Pre-trained weights: These significantly reduce training time and computational demands by using knowledge from large-scale datasets.

- Hungarian matching algorithm: This ensures optimal alignment between predicted outputs and ground truth annotations during training, enhancing accuracy.

This architecture’s flexibility and precision make it an ideal choice for building robust sign language detection systems.
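The matching step described above can be illustrated with a small sketch. It assumes a hypothetical cost table in which `cost[i][j]` scores how poorly prediction `j` fits ground truth box `i`, and it finds the cheapest one-to-one pairing by brute force rather than with the Hungarian algorithm itself. Both approaches return the same optimal pairing; the Hungarian algorithm simply gets there much faster on realistic sizes. The function name and the cost values are illustrative only.

```python
from itertools import permutations


def match_predictions(cost):
    """Find the cheapest one-to-one pairing of ground truth boxes to predictions.

    cost[i][j] is the cost of pairing ground truth box i with prediction j.
    Returns (total_cost, assignment), where assignment[i] is the index of the
    prediction matched to ground truth box i.
    Assumes there are at least as many predictions as ground truth boxes.
    """
    n_gt = len(cost)
    n_pred = len(cost[0])
    best_total, best_assignment = float("inf"), None
    # Brute force: try every way of giving each ground truth box a distinct prediction.
    for candidate in permutations(range(n_pred), n_gt):
        total = sum(cost[i][candidate[i]] for i in range(n_gt))
        if total < best_total:
            best_total, best_assignment = total, candidate
    return best_total, best_assignment


# Hypothetical costs: 2 annotated gestures (rows), 3 model predictions (columns).
cost = [
    [0.9, 0.1, 0.8],
    [0.2, 0.3, 0.7],
]
total, assignment = match_predictions(cost)
print(assignment)  # (1, 0): box 0 pairs with prediction 1, box 1 with prediction 0
```

Prediction 2 is left unmatched here. During training, unmatched predictions are the ones pushed toward the "no object" class.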

Step 1: Preparing Your Data

Data preparation is the foundation of any successful detection model. To build a reliable system, follow these essential steps:

- Collect images or video frames: Use a standard webcam or source data from publicly available datasets to capture a variety of sign language gestures. Ensure diversity in lighting, angles, and backgrounds to improve model robustness.

- Annotate the data: Tools like Label Studio allow you to draw bounding boxes around hand gestures in each image, creating the ground truth annotations required for training.

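- Format the data: Convert your annotations into the YOLO format, which stores one text file per image, with one line per bounding box giving the class index followed by the box center, width, and height as fractions of the image size. The training pipeline in this guide reads these files.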

Properly annotated and formatted data ensures the model learns effectively, minimizing errors during training and testing.
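To make the label format concrete, here is a minimal sketch that reads one YOLO-style label line and converts it to pixel coordinates. The function name, the example line, and the 640x480 image size are assumptions chosen for illustration, not values from the tutorial.

```python
def yolo_to_pixel_box(line, image_width, image_height):
    """Convert one YOLO label line to (class_id, x_min, y_min, x_max, y_max) in pixels.

    A YOLO line looks like "class_id cx cy w h", where the four box values
    are fractions of the image width and height.
    """
    parts = line.split()
    class_id = int(parts[0])
    cx, cy, w, h = (float(value) for value in parts[1:5])
    x_min = (cx - w / 2) * image_width
    y_min = (cy - h / 2) * image_height
    x_max = (cx + w / 2) * image_width
    y_max = (cy + h / 2) * image_height
    return class_id, x_min, y_min, x_max, y_max


# Hypothetical label: class 2, centered in the frame, a quarter wide and half tall.
label_line = "2 0.5 0.5 0.25 0.5"
box = yolo_to_pixel_box(label_line, image_width=640, image_height=480)
print(box)  # (2, 240.0, 120.0, 400.0, 360.0)
```

Converting a few labels back to pixels and drawing them over the source images is a quick way to catch annotation or export mistakes before training.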


Step 2: Training the Model

With your data prepared, the next step is training the model using PyTorch, a widely adopted deep learning framework. Here’s how to proceed:

- Load pre-trained weights: Start with DETR’s pre-trained weights to save time and computational resources while improving initial performance.

- Set hyperparameters: Configure parameters such as learning rate, batch size, and the number of training epochs to optimize the model’s performance.

- Monitor progress: Track metrics like accuracy and loss during training. Save checkpoints periodically so that an interrupted run can resume without starting over.

- Apply data augmentation: Techniques such as flipping, rotation, and scaling enhance the model’s ability to generalize, especially when working with smaller datasets.

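DETR’s loss function combines three terms: a classification loss, an L1 bounding box regression loss, and a generalized intersection over union (GIoU) loss. The two box terms complement each other, since the L1 term penalizes coordinate errors directly while the GIoU term measures how well the predicted and annotated boxes overlap regardless of their size. Together they help the model achieve high accuracy in detecting and classifying gestures.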
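As a rough illustration of the GIoU term, the sketch below computes generalized IoU for two boxes in plain Python. It assumes boxes are given as `(x_min, y_min, x_max, y_max)` with positive area; the function names are illustrative, and a real training loop would use a batched tensor implementation instead.

```python
def giou(box_a, box_b):
    """Generalized IoU of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)

    # Overlap region (zero if the boxes do not intersect).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = area_a + area_b - inter
    iou = inter / union

    # Smallest box that encloses both inputs.
    enclosing = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))

    # Penalize the empty space inside the enclosing box.
    return iou - (enclosing - union) / enclosing


def giou_loss(box_a, box_b):
    """Loss form: 0 for a perfect match, approaching 2 for distant boxes."""
    return 1.0 - giou(box_a, box_b)


print(giou((0, 0, 2, 2), (0, 0, 2, 2)))       # 1.0 for identical boxes
print(giou_loss((0, 0, 1, 1), (2, 0, 3, 1)))  # above 1.0 for boxes that do not touch
```

Unlike plain IoU, which is zero for every pair of non-overlapping boxes, GIoU keeps decreasing as the boxes move apart, so it still gives the model a useful signal when a prediction misses the hand entirely.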

Step 3: Testing and Real-Time Detection

Once the training phase is complete, evaluate the model’s performance on a separate test dataset to ensure it generalizes well to unseen data. For real-time detection, connect a webcam and deploy the trained model. The system will process live video frames, displaying bounding boxes and class labels for detected gestures.

To enhance usability and reliability:

- Adjust the confidence threshold: Fine-tune this setting to filter out low-confidence predictions, so that only detections the model is confident in are displayed.

- Optimize the setup: Ensure your webcam is properly configured and positioned to avoid interruptions or inaccuracies during detection.
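The confidence threshold can be sketched as a simple filter over the model's raw outputs. This example assumes each prediction carries one logit per gesture class plus a final "no object" logit, which is how DETR-style models represent empty slots. The class names, logits, boxes, and the 0.7 threshold are made-up illustrative values.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(logits)
    exps = [math.exp(x - highest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def filter_detections(class_logits, boxes, class_names, threshold=0.7):
    """Keep predictions whose best gesture class scores at or above the threshold.

    The last logit of each prediction is treated as the "no object" class
    and is never reported as a detection.
    """
    kept = []
    for logits, box in zip(class_logits, boxes):
        probs = softmax(logits)[:-1]  # drop the "no object" probability
        score = max(probs)
        if score >= threshold:
            kept.append((class_names[probs.index(score)], score, box))
    return kept


# Hypothetical outputs for three predictions over two gesture classes.
class_names = ["hello", "thanks"]
class_logits = [
    [4.0, 0.0, 0.0],  # confident "hello"
    [0.0, 0.5, 3.0],  # mostly "no object"
    [1.0, 1.2, 0.0],  # undecided between the two gestures
]
boxes = [(0.5, 0.5, 0.2, 0.3), (0.1, 0.1, 0.1, 0.1), (0.7, 0.4, 0.2, 0.2)]

detections = filter_detections(class_logits, boxes, class_names)
for name, score, box in detections:
    print(f"{name}: {score:.2f} at {box}")
```

Raising the threshold hides more uncertain boxes at the cost of occasionally missing a real gesture; lowering it does the opposite, so the right value is best found by testing on live video.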

Real-time detection systems can be further refined by incorporating user feedback and testing under various conditions to improve performance.

Technical Insights and Practical Applications

DETR’s architecture is both versatile and scalable, making it suitable for a wide range of applications beyond sign language detection. Key technical highlights include:

- Hungarian matching algorithm: This ensures precise alignment between predictions and ground truth annotations, even when the number of objects varies across images.

- Data augmentation: By simulating diverse scenarios, augmentation techniques improve the model’s ability to handle variations in lighting, orientation, and background noise.
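One detail that is easy to miss with augmentation is that the annotations must be transformed together with the image. The sketch below shows a horizontal flip, assuming boxes in the YOLO-style normalized form `(class_id, cx, cy, w, h)` and an image represented as a plain list of pixel rows. Both function names are illustrative.

```python
def hflip_image(rows):
    """Mirror an image, given as a list of pixel rows, left to right."""
    return [list(reversed(row)) for row in rows]


def hflip_yolo_boxes(boxes):
    """Mirror normalized boxes (class_id, cx, cy, w, h) left to right.

    Only the horizontal center moves; the vertical center, width, and
    height are unchanged by a horizontal flip.
    """
    return [(class_id, 1.0 - cx, cy, w, h) for class_id, cx, cy, w, h in boxes]


# Tiny 2x3 "image" and one box centered a quarter of the way across.
image = [[1, 2, 3], [4, 5, 6]]
boxes = [(0, 0.25, 0.5, 0.2, 0.4)]

flipped_image = hflip_image(image)
flipped_boxes = hflip_yolo_boxes(boxes)
print(flipped_image)  # [[3, 2, 1], [6, 5, 4]]
print(flipped_boxes)  # [(0, 0.75, 0.5, 0.2, 0.4)]
```

Mirroring swaps the left and right hands, so it is worth checking that a flipped gesture still carries the same label in your dataset before enabling this augmentation.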

One of the most appealing aspects of DETR is its accessibility. Because you start from pre-trained weights, you can train and test the model on a standard laptop without a dedicated GPU, although training on a CPU is slower. The training pipeline is also highly customizable, allowing you to:

- Add new gesture classes to expand the system’s capabilities.

- Adapt the model to different sign languages, making it versatile for various linguistic contexts.

If challenges arise during the process, community forums and troubleshooting guides offer valuable support. Additionally, optimizing your hardware and software configurations ensures smooth real-time detection and deployment.

Empowering Communication Through Technology

By following this guide, you can develop a functional sign language detection system using DETR. The combination of advanced architecture, pre-trained weights, and user-friendly tools like Label Studio makes the process accessible to individuals with varying levels of expertise in deep learning. With minimal resources and a clear workflow, you can contribute to fostering inclusivity and accessibility in communication through innovative technology. This project not only highlights the potential of deep learning but also underscores its practical applications in creating a more connected and understanding world.

Media Credit: Nicholas Renotte
