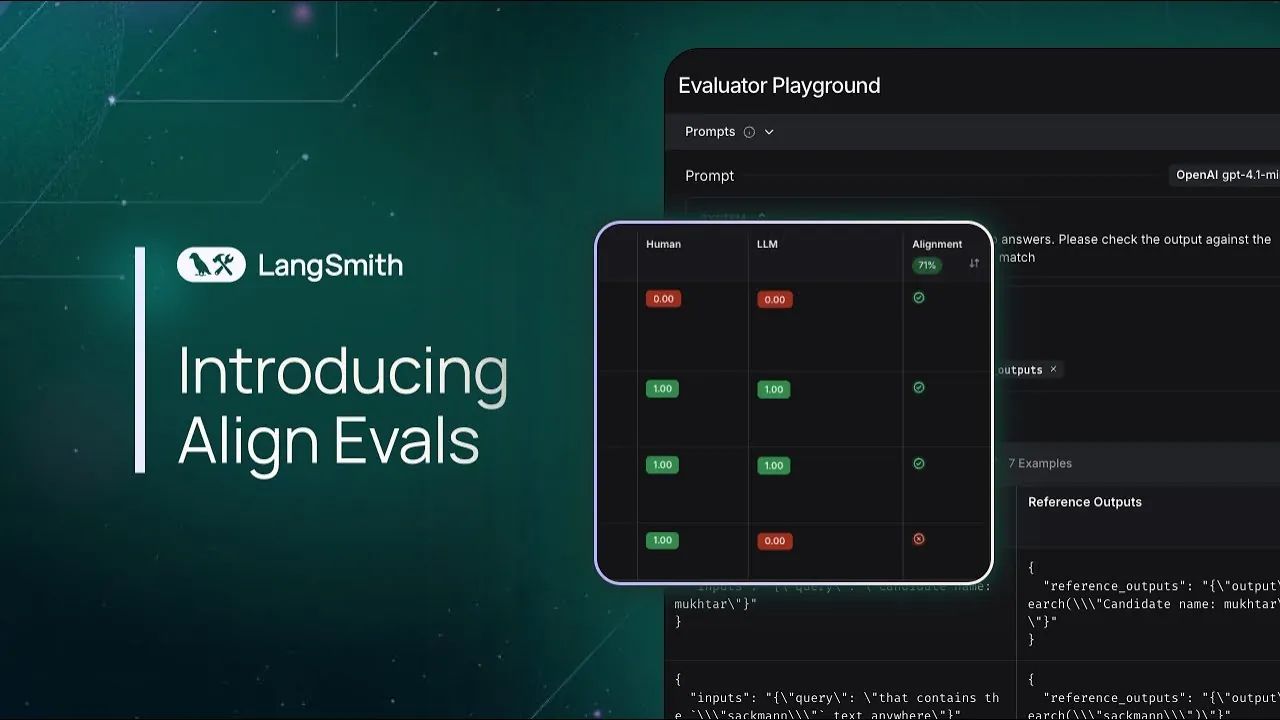

What if evaluating the performance of large language models (LLMs) could be as precise and seamless as setting a GPS to your destination? With the rapid rise of LLM applications in everything from creative writing to technical problem-solving, ensuring these models meet user expectations has become a critical challenge. Yet traditional evaluation methods often feel like navigating uncharted terrain: time-consuming, inconsistent, and prone to misalignment between machine outputs and human judgment. Enter Align Evals, a new feature introduced by LangSmith, designed to bring clarity and structure to the evaluation process. By aligning machine-generated assessments with human-labeled benchmarks, Align Evals promises not only greater accuracy but also a streamlined workflow that enables users to refine their applications with confidence.

LangChain explains how Align Evals changes the way developers and researchers evaluate LLM-generated outputs. From its ability to detect and resolve misalignments to its iterative prompt refinement tools, Align Evals offers a framework for achieving consistency and reliability in LLM applications. Whether you’re perfecting recipe titles or tackling complex technical content, Align Evals adapts to your own scoring criteria, ensuring your outputs align with human expectations. By the end, you’ll discover how this tool not only saves time but also enhances the quality of your applications, bridging the gap between innovation and precision. The question is: how will you harness its potential?

Streamlining LLM Evaluations

TL;DR Key Takeaways:

- Align Evals is a feature by LangSmith designed to align machine-generated evaluations with human-labeled data, ensuring greater accuracy, reliability, and adherence to user-defined criteria.

- The tool follows a structured workflow, including gathering sample outputs, human labeling, and iterative prompt refinement, to dynamically improve LLM evaluations.

- Key features include evaluator creation, iterative prompt refinement, misalignment detection, and progress tracking, providing a robust framework for consistent and efficient evaluations.

- Align Evals adapts to various scoring criteria, making it suitable for diverse applications, such as evaluating creative content, technical outputs, or user-facing text.

- Inspired by Eugene Yan’s research, Align Evals prioritizes accessibility and precision, offering developers and researchers a powerful tool to enhance the quality and reliability of LLM applications.

The Purpose and Role of Align Evals

Align Evals is built to make the evaluation of LLM outputs both accessible and precise. Its primary objective is to determine whether machine-generated content meets specific scoring criteria by comparing it to human-labeled benchmarks. This alignment process minimizes discrepancies, ensures evaluations reflect human judgment, and ultimately enhances the overall quality of LLM outputs. By bridging the gap between human expectations and machine-generated results, Align Evals enables users to create more reliable and consistent applications.

How the Align Evals Workflow Operates

The workflow of Align Evals is designed to simplify the evaluation process while maintaining flexibility and adaptability. It follows a structured, step-by-step approach that includes:

- Gathering representative sample runs: Collect outputs from your LLM application that represent the range of its performance.

- Labeling samples with human expertise: Use human input to create a reliable benchmark for evaluation.

- Iterative refinement of prompts: Continuously adjust and refine prompts to ensure the LLM’s evaluations align with human-labeled data.

This iterative process keeps the evaluation dynamic, allowing you to adapt as your application evolves. By following this workflow, you can identify and address inconsistencies, ensuring that your LLM application meets the desired standards.
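To make the idea of "alignment" in this workflow concrete, here is a minimal sketch in plain Python. It assumes binary labels (1 = meets the criteria, 0 = does not) and measures alignment as the simple agreement rate between human labels and judge scores. The function name `alignment_score` and the sample data are hypothetical, and this is not LangSmith's API or necessarily the exact metric the product reports.

```python
from typing import Sequence


def alignment_score(human_labels: Sequence[int], judge_scores: Sequence[int]) -> float:
    """Return the fraction of samples where the judge's score equals the human label."""
    if len(human_labels) != len(judge_scores):
        raise ValueError("human_labels and judge_scores must have the same length")
    if not human_labels:
        raise ValueError("need at least one labeled sample")
    matches = sum(1 for h, j in zip(human_labels, judge_scores) if h == j)
    return matches / len(human_labels)


# Hypothetical data: five sample runs, labeled by a human and scored by an LLM judge.
human = [1, 0, 1, 1, 0]
judge = [1, 0, 0, 1, 1]

score = alignment_score(human, judge)
print(f"alignment: {score:.0%}")
```

Each round of prompt refinement would re-score the same labeled samples and recompute this number, so a rising value suggests the evaluator is moving closer to human judgment.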


Handling Evaluations and Scoring Criteria

Align Evals enables you to use the LLM itself as a judge to score outputs against predefined criteria. For example, if you are evaluating recipe titles, you might establish a rule to avoid unnecessary adjectives or overly complex phrasing. By iteratively refining prompts and evaluators, Align Evals ensures the scoring process aligns with your specific standards. This approach not only enhances the accuracy of evaluations but also helps identify and resolve misalignments effectively.

The tool’s ability to adapt to different scoring criteria makes it suitable for a wide range of applications. Whether you are evaluating creative content, technical outputs, or user-facing text, Align Evals provides the flexibility needed to meet your unique requirements.
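As an illustration of the LLM-as-judge pattern described above, the sketch below turns a list of criteria into a grading prompt and converts the judge's reply into a numeric score. The prompt wording, the PASS/FAIL convention, and the helper names `build_judge_prompt` and `parse_verdict` are assumptions made for this example. The model call itself is deliberately left out, since it depends on whichever LLM client you use.

```python
from typing import List, Optional


def build_judge_prompt(criteria: List[str], output: str) -> str:
    """Assemble a grading prompt that lists each criterion as a bullet."""
    rules = "\n".join(f"- {rule}" for rule in criteria)
    return (
        "You are grading an LLM output against these criteria:\n"
        f"{rules}\n\n"
        f"Output to grade:\n{output}\n\n"
        "Reply with exactly one word: PASS or FAIL."
    )


def parse_verdict(reply: str) -> Optional[int]:
    """Map the judge's reply to 1 (pass) or 0 (fail); None if it is unparseable."""
    word = reply.strip().upper().rstrip(".")
    if word == "PASS":
        return 1
    if word == "FAIL":
        return 0
    return None


criteria = ["Avoid unnecessary adjectives", "Avoid overly complex phrasing"]
prompt = build_judge_prompt(criteria, "Lemon Chicken Soup")
print(prompt)
```

Returning `None` for an unparseable reply keeps malformed judge responses visible instead of silently counting them as a pass or a fail.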

Key Features of Align Evals

Align Evals is equipped with a comprehensive set of tools designed to support and streamline the evaluation process. These features include:

- Evaluator creation and modification: Build, test, and refine evaluators to assess LLM outputs effectively.

- Iterative prompt refinement: Continuously improve prompts to align machine evaluations with human-labeled benchmarks.

- Misalignment detection and resolution: Identify discrepancies between machine and human evaluations and address them systematically.

- Progress tracking tools: Monitor alignment improvements over time to ensure consistent evaluation quality.

These features work together to provide a robust framework for evaluating LLM applications. By using these tools, users can achieve greater consistency, accuracy, and efficiency in their evaluation processes.
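Misalignment detection and progress tracking can be pictured with a short sketch. It assumes binary labels and hypothetical judge scores for two evaluator prompt versions ("v1" and "v2"). The `Sample` class, the function names, and the data are illustrative only and do not reflect how LangSmith stores or displays results.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Sample:
    output: str
    human: int  # human label: 1 = pass, 0 = fail
    judge: int  # judge score under the evaluator prompt being tested


def find_misalignments(samples: List[Sample]) -> Dict[str, List[Sample]]:
    """Group disagreements by direction so each kind can be addressed in the prompt."""
    return {
        "too_lenient": [s for s in samples if s.judge == 1 and s.human == 0],
        "too_strict": [s for s in samples if s.judge == 0 and s.human == 1],
    }


def agreement(samples: List[Sample]) -> float:
    """Fraction of samples where the judge matches the human label."""
    return sum(s.human == s.judge for s in samples) / len(samples)


# Hypothetical human-labeled outputs and judge scores per prompt version.
labeled = [("Pasta Salad", 1), ("Amazing Ultimate Pasta", 0),
           ("Tomato Soup", 1), ("Best-Ever Soup", 0)]
judge_by_version = {"v1": [1, 1, 0, 0], "v2": [1, 0, 0, 0]}

history: List[Tuple[str, float]] = []  # (prompt version, agreement) over time
for version, scores in judge_by_version.items():
    samples = [Sample(o, h, j) for (o, h), j in zip(labeled, scores)]
    gaps = find_misalignments(samples)
    history.append((version, agreement(samples)))
    print(version, history[-1][1],
          "lenient:", [s.output for s in gaps["too_lenient"]],
          "strict:", [s.output for s in gaps["too_strict"]])
```

Separating "too lenient" from "too strict" disagreements hints at which direction the evaluator prompt needs to move in the next iteration.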

A Practical Example: Evaluating Recipe Titles

To illustrate the functionality of Align Evals, consider a scenario where you are tasked with evaluating recipe titles. Your goal might be to ensure that the titles are concise, clear, and free from unnecessary adjectives. Using Align Evals, you can follow these steps:

- Define the evaluation criteria: Establish clear rules, such as avoiding overly descriptive language or enforcing brevity.

- Label sample titles with human input: Create a benchmark by labeling a set of sample titles according to the defined criteria.

- Refine the LLM’s evaluation prompts: Adjust prompts iteratively until the LLM’s scoring aligns with your expectations.

This process not only saves time but also ensures that the evaluation outcomes are consistent and aligned with your goals. By automating parts of the evaluation while maintaining human oversight, Align Evals strikes a balance between efficiency and accuracy.
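The recipe-title steps can be walked through end to end with a toy example. Because a real LLM judge cannot be called in a self-contained snippet, two rule-based functions stand in for two successive versions of an evaluator prompt. The titles, labels, word list, and four-word limit are all invented for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Step 1: criteria, encoded here as stand-in rules (a real setup would put them in a prompt).
HYPE_WORDS = {"amazing", "ultimate", "best", "delicious", "incredible"}
MAX_WORDS = 4


def judge_v1(title: str) -> int:
    """First attempt: checks brevity only."""
    return 1 if len(title.split()) <= MAX_WORDS else 0


def judge_v2(title: str) -> int:
    """Refined attempt: checks brevity and rejects hype adjectives."""
    words = title.lower().replace("-", " ").split()
    return 1 if len(words) <= MAX_WORDS and not HYPE_WORDS & set(words) else 0


# Step 2: human-labeled sample titles (1 = acceptable, 0 = not acceptable).
labeled_titles: List[Tuple[str, int]] = [
    ("Lemon Chicken Soup", 1),
    ("Amazing Chicken Soup", 0),
    ("The Ultimate Incredible Slow-Cooked Weeknight Beef Stew", 0),
    ("Garlic Butter Shrimp", 1),
    ("Best Delicious Brownies", 0),
]


def agreement(judge: Callable[[str], int]) -> float:
    """Share of titles where the judge agrees with the human label."""
    return sum(judge(t) == label for t, label in labeled_titles) / len(labeled_titles)


# Step 3: compare evaluator versions and keep the one closest to the human labels.
results: Dict[str, float] = {"v1": agreement(judge_v1), "v2": agreement(judge_v2)}
best = max(results, key=results.get)
print(results, "best:", best)
```

In this toy data the brevity-only version passes short but hype-laden titles, which is the kind of gap a refined evaluator prompt is meant to close.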

Inspiration and Availability

Align Evals draws inspiration from Eugene Yan’s work on “AlignEval,” which emphasizes the importance of aligning LLM evaluations with human preferences. Now widely available, Align Evals offers a user-friendly interface and a suite of tools to support the evaluation process. Its design prioritizes accessibility and precision, making it a useful resource for developers and researchers working with LLM applications.

By incorporating insights from research and practical use cases, Align Evals provides a reliable and adaptable solution for evaluating machine-generated outputs. Its availability ensures that users across various industries can benefit from its capabilities, improving the quality and reliability of their LLM applications.

Enhancing LLM Applications with Align Evals

Align Evals represents a significant advancement in the evaluation of LLM-generated outputs. By aligning machine evaluations with human-labeled data, it ensures greater accuracy, reliability, and consistency. Whether you are refining prompts, addressing misalignments, or defining specific scoring criteria, Align Evals offers a structured and efficient solution to meet your needs. With its robust features and intuitive design, this tool enables users to align LLM-generated content with human preferences, streamlining the evaluation process and enhancing the quality of applications.

Media Credit: LangChain
