What if the success of your AI application hinged on just three steps? In a world where large language models (LLMs) are reshaping industries, the ability to effectively evaluate their performance is more critical than ever. Yet, many teams struggle to move beyond vague benchmarks or generic testing methods, leaving their systems vulnerable to inconsistency and misalignment. The truth is, a structured evaluation process can mean the difference between an AI that delivers reliable, real-world results and one that falls short of expectations. This report offers a clear, actionable framework to help you navigate this challenge with confidence.

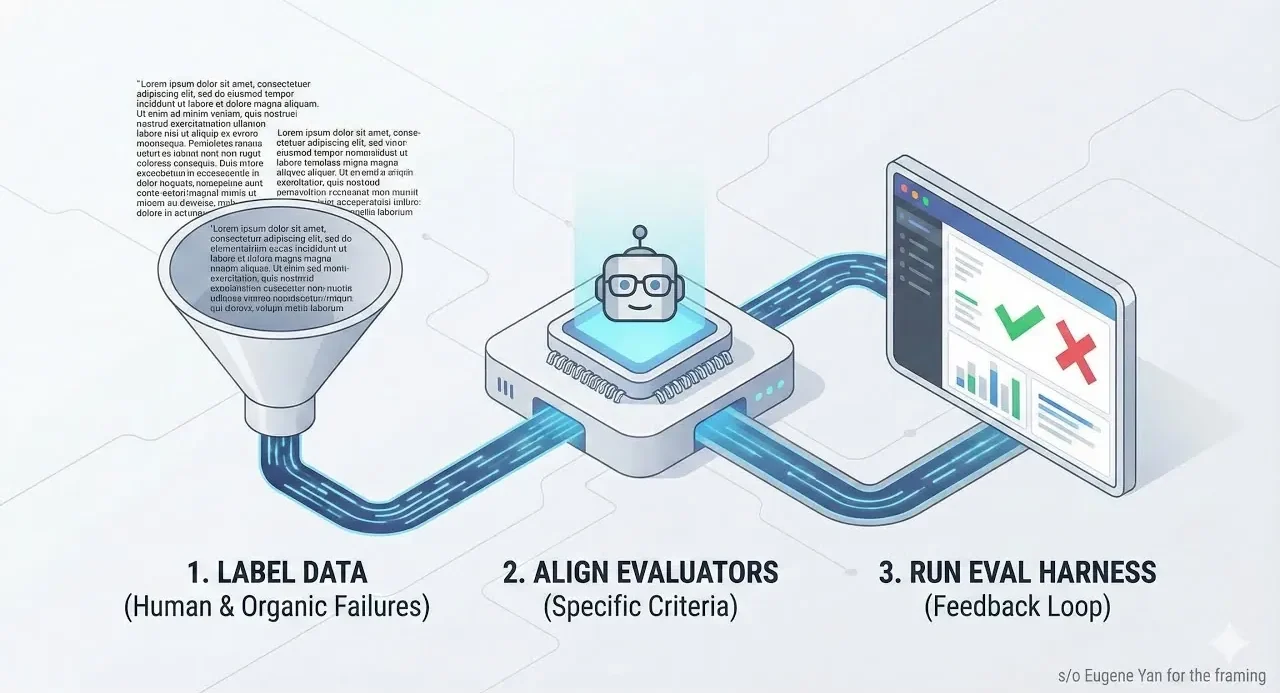

In the following sections, LangChain takes you through a three-step methodology designed to streamline the evaluation of AI applications. From crafting a purpose-built dataset to aligning evaluators and conducting iterative tests, each step builds on the last so that your system improves in a measurable way. Whether you’re fine-tuning outputs to meet stylistic goals or addressing performance gaps, this process equips you with the tools to refine your application and adapt to changing demands. By the end, you’ll not only understand how to evaluate AI effectively but also gain insights into creating systems that are as dynamic as the challenges they’re built to solve.

Effective LLM Evaluation Guide

TL;DR Key Takeaways:

- Effective evaluation of large language model (LLM) applications involves a structured three-step process: labeling a dataset, aligning the evaluator, and conducting iterative tests.

- Creating a purpose-built dataset with clear labeling criteria ensures the evaluation process aligns with specific goals and defines measurable success for the application.

- Aligning the LLM evaluator with defined criteria helps refine outputs to meet stylistic, tonal, and functional expectations, requiring ongoing adjustments as needs evolve.

- Iterative testing with an evaluation harness allows for continuous improvement by identifying inconsistencies, expanding datasets, and refining system performance over time.

- Customization and iteration are essential for adapting the evaluation process to unique application requirements, ensuring reliable, high-quality results tailored to real-world demands.

Step 1: Label a Small, Purpose-Built Dataset

The foundation of any effective evaluation process is a dataset that mirrors your specific use case. For instance, if your goal is to transform lengthy blog posts into concise, professional tweets, your dataset should include examples of both the input (long-form content) and the desired output (short, polished tweets). Label this dataset with clear, binary criteria, such as “pass” or “fail”, to determine whether each output meets your expectations.

Why is this step essential?

- It establishes a clear and measurable definition of success for your application.

- It ensures the evaluation process is directly aligned with your unique goals and requirements.

- It avoids reliance on generic or overly broad evaluation standards that may not suit your needs.

For example, if maintaining a professional tone is a priority, your labeling criteria should explicitly reflect this requirement. By creating precise and purpose-driven labels, you lay a strong foundation for the evaluation process, making sure that subsequent steps are focused and effective.
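As a rough illustration of what such a labeled dataset might look like, here is a minimal Python sketch. The `LabeledExample` class, its field names, and the sample rows are all hypothetical and are not taken from LangChain's material; the only idea carried over from the text is that each example pairs an input with an output and a binary pass/fail label.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LabeledExample:
    """One hand-labeled row (hypothetical schema, for illustration only)."""
    source_text: str  # long-form input, e.g. a blog post excerpt
    candidate: str    # the output being judged, e.g. a tweet
    passed: bool      # binary human label: True = pass, False = fail
    reason: str       # short note recording why the label was given


# Invented sample rows; a real dataset would come from your own use case.
DATASET: List[LabeledExample] = [
    LabeledExample("Post about eval datasets...", "Start evals with 20 labeled examples.", True, "concise, professional"),
    LabeledExample("Post about eval datasets...", "OMG this will BLOW your mind!!!", False, "unprofessional tone"),
    LabeledExample("Post about judge prompts...", "Align the judge with your labels first.", True, "clear and on topic"),
    LabeledExample("Post about judge prompts...", "Unlock game-changing synergy today!", False, "promotional"),
]


def label_summary(dataset: List[LabeledExample]) -> Dict[str, int]:
    """Count pass and fail labels so a lopsided dataset is easy to spot."""
    passes = sum(1 for example in dataset if example.passed)
    return {"pass": passes, "fail": len(dataset) - passes}


print(label_summary(DATASET))
```

Recording a short `reason` next to each label is a design choice in this sketch: it keeps the labeling criteria explicit, which makes later disagreements with an automated evaluator easier to diagnose.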

Step 2: Align the LLM Evaluator

After labeling your dataset, the next step is to align the LLM evaluator with your defined criteria. This involves tuning the evaluator so that it recognizes and prioritizes the outcomes you’ve outlined and its verdicts agree with your labels. Misalignments can occur at this stage, but they can be addressed through careful refinement and iteration.

How can alignment be achieved?

- If the evaluator approves outputs that sound overly promotional or artificial, adjust the evaluation criteria to reject such results.

- If the outputs fail to meet stylistic or tonal guidelines, refine the evaluator’s parameters to better match your expectations.

This step is not a one-time task. As new challenges emerge or requirements evolve, you may need to revisit and adjust your criteria. The ultimate goal is to ensure the evaluator consistently enforces your standards, whether that involves flagging AI-like phrasing, checking adherence to specific stylistic preferences, or confirming a particular tone.
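One way to make alignment concrete is to measure how often the evaluator's verdicts match your human labels, then inspect the disagreements. The sketch below assumes the evaluator can be treated as a function from text to a pass/fail boolean. The two `judge_*` functions are simple rule-based stand-ins for an LLM judge, and the banned-phrase list is invented for illustration; none of this is a real library API.

```python
from typing import Callable, List, Tuple

# (candidate output, human label) pairs; invented for illustration.
EXAMPLES: List[Tuple[str, bool]] = [
    ("New post: three steps for evaluating LLM apps.", True),
    ("AMAZING!!! You won't BELIEVE this game-changing AI trick!", False),
    ("We labeled 50 examples before writing any evaluator prompt.", True),
    ("Unlock revolutionary synergy with our platform today!", False),
]

# Hypothetical phrases treated as promotional in the revised criteria.
BANNED_PHRASES = ("game-changing", "revolutionary", "you won't believe")


def judge_v1(text: str) -> bool:
    """Stand-in evaluator, first draft: only checks tweet length."""
    return len(text) <= 280


def judge_v2(text: str) -> bool:
    """Stand-in evaluator, revised: also rejects promotional phrasing."""
    lowered = text.lower()
    return len(text) <= 280 and not any(phrase in lowered for phrase in BANNED_PHRASES)


def agreement(
    examples: List[Tuple[str, bool]], judge: Callable[[str], bool]
) -> Tuple[float, List[str]]:
    """Return the share of examples where the judge matches the human label,
    plus the texts on which they disagree."""
    disagreements = [text for text, label in examples if judge(text) != label]
    rate = (len(examples) - len(disagreements)) / len(examples)
    return rate, disagreements


for name, judge in (("v1", judge_v1), ("v2", judge_v2)):
    rate, misses = agreement(EXAMPLES, judge)
    print(name, rate, misses)
```

With a real LLM judge, the revision between the two versions would be a change to the evaluator's instructions rather than to a phrase list, but the loop is the same: measure agreement, read the disagreements, adjust the criteria, and measure again.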

Step 3: Test Iteratively with an Evaluation Harness

The final step in the evaluation process is to test your system using an evaluation harness. This involves running prompts through the LLM and assessing the outputs against your established criteria. Each modification, whether it’s a new prompt, a model adjustment, or a parameter tweak, should be tested to evaluate its impact on performance.

Why is iterative testing crucial?

- It allows you to identify inconsistencies or shortcomings in the system’s outputs and address them promptly.

- It provides opportunities to expand your dataset with additional examples, especially if the system struggles with specific types of inputs.

Feedback loops play a critical role in this phase. By analyzing the results of each test, you can pinpoint areas for improvement and make targeted adjustments. For example, if a configuration change leads to inconsistent outputs, you can refine the prompts or retrain the evaluator to resolve the issue. This iterative approach ensures that your system evolves over time, adapting to new requirements and improving its overall reliability.
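The testing loop described above can be sketched as a small harness that runs the same inputs through each variant of the application and reports the evaluator's pass rate per variant. Everything here is a simplified assumption: the two `variant_*` functions stand in for different prompts or model configurations, `evaluator` stands in for an aligned LLM judge, and the 100-character limit is arbitrary.

```python
from typing import Callable, Dict, List

# Invented long-form inputs; the second is deliberately too long to post as-is.
INPUTS: List[str] = [
    "Evaluation starts with a labeled dataset. Then you align the judge. Then you iterate.",
    ("Binary labels keep the judging simple. " + "Extra detail pads the post. " * 5).strip(),
]


def variant_truncate(text: str) -> str:
    """Stand-in for a baseline configuration: cut the text at 100 characters."""
    return text[:100]


def variant_first_sentence(text: str) -> str:
    """Stand-in for a revised configuration: keep only the first sentence."""
    return text.split(". ")[0].rstrip(".") + "."


def evaluator(output: str) -> bool:
    """Stand-in judge: pass if the output is short and ends on a full stop."""
    return len(output) <= 100 and output.endswith(".")


def run_harness(
    inputs: List[str],
    variants: Dict[str, Callable[[str], str]],
    judge: Callable[[str], bool],
) -> Dict[str, float]:
    """Run every input through every variant and return each variant's pass rate."""
    results: Dict[str, float] = {}
    for name, generate in variants.items():
        verdicts = [judge(generate(text)) for text in inputs]
        results[name] = sum(verdicts) / len(verdicts)
    return results


RESULTS = run_harness(
    INPUTS,
    {"truncate": variant_truncate, "first_sentence": variant_first_sentence},
    evaluator,
)
print(RESULTS)
```

Keeping the inputs and the judge fixed while only the variant changes is what makes the pass rates comparable from one run to the next. Inputs on which a variant fails are natural candidates to add to the labeled dataset.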

The Role of Iteration in Continuous Improvement

Evaluating an LLM application is not a one-time effort. It requires ongoing iteration to adapt to changing needs and improve performance. As your application evolves, earlier steps, such as labeling additional data or refining the evaluator, may need to be revisited. This iterative process builds confidence in the system’s ability to perform reliably in diverse, real-world scenarios.

Customization: Adapting the Process to Your Needs

Every LLM application is unique, and the evaluation process should reflect this individuality. Customization is key to making sure the system meets your specific objectives. Tools like LangSmith can simplify this process by offering features for data labeling, evaluator alignment, and iterative testing. These tools enable you to tailor the evaluation process, making it easier to achieve your desired outcomes while maintaining efficiency and precision.

By following this structured, three-step methodology (labeling a purpose-built dataset, aligning the evaluator, and conducting iterative tests), you can systematically evaluate and refine your AI application. This approach supports continuous improvement, allowing your system to adapt to evolving requirements and deliver reliable, high-quality results tailored to your needs.

Media Credit: LangChain
