Are transformers really the pinnacle of AI innovation, or are they just an overengineered way to solve simple problems? Prompt Engineering explores how the innovative DeepSeek Engram challenges the dominance of transformer-based architectures by proposing a bold alternative: treating transformers as little more than expensive hashmaps. This provocative claim stems from Engram’s ability to separate straightforward recall tasks from complex reasoning, introducing a smarter, more efficient way to handle language model computation. By rethinking how tasks are processed, Engram not only reduces computational waste but also redefines what scalability and speed can look like in large language models.

In this overview, we’ll break down the core innovations behind Engram, including its use of hash-based lookups for simple memory tasks and its context-aware gating mechanism for deeper reasoning. You’ll discover how this architecture minimizes GPU load, improves latency, and challenges the inefficiencies of traditional transformers. But it’s not all smooth sailing: Engram’s reliance on static lookup tables and its potential for hash collisions raise important questions about its adaptability and precision. Could this be the future of AI, or just a stepping stone to something greater? Let’s explore the implications and limitations of this new approach.

DeepSeek Engram’s LLM Breakthrough

TL;DR Key Takeaways:

- Engram introduces a novel architecture for large language models (LLMs) that separates simple recall tasks from complex reasoning, optimizing computational efficiency and scalability.

- Static memory tasks, like fact retrieval, are handled using hash-based lookups, while dynamic reasoning tasks use transformer layers for deeper computation.

- Engram’s design reduces GPU load by storing lookup tables in system RAM, minimizing hardware costs and improving deployment efficiency.

- Performance benchmarks show significant gains in both knowledge recall and reasoning tasks, allowing faster and more accurate processing without increasing transformer layers.

- Despite its advantages, Engram faces challenges such as hash collisions, reliance on static lookup tables, and limitations in handling highly specialized or complex patterns.

The Problem with Transformers

Transformers, the foundational architecture behind many LLMs, apply the same computational effort to all tasks, regardless of complexity. Whether retrieving a straightforward fact like “Paris is the capital of France” or solving a challenging reasoning problem, transformers treat these tasks equally. This uniform approach leads to inefficiencies: simple recall tasks unnecessarily engage multiple transformer layers, inflating computational costs and limiting scalability. As LLMs grow larger and more complex, these inefficiencies become increasingly problematic, hindering their ability to operate effectively in real-world scenarios.

Engram’s Innovative Solution

Engram introduces a novel architecture that addresses these inefficiencies by incorporating a conditional memory mechanism. This mechanism separates static memory tasks, such as fact retrieval, from dynamic reasoning tasks that require deeper computation. The key components of this system include:

- Static Memory Tasks: Simple recall tasks are handled using hash-based lookups, bypassing the need for deep computation and conserving resources.

- Dynamic Reasoning: Complex tasks requiring nuanced understanding are processed by transformer layers, ensuring accurate and context-aware responses.

- Context-Aware Gating: A gating mechanism ensures that retrieved memory aligns with the task’s context, maintaining both accuracy and efficiency.

By allocating computational resources based on task complexity, Engram achieves a balance between speed and accuracy, making it a more efficient alternative to traditional transformer-based models.
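To make the gating idea concrete, here is a minimal sketch in plain Python. It assumes the gate is a single scalar obtained by passing a scaled dot product between the current hidden state and the retrieved memory vector through a sigmoid; that scoring rule, and the names `gate_value` and `gated_merge`, are illustrative assumptions rather than Engram’s published formulation.

```python
import math


def sigmoid(x: float) -> float:
    """Squash a real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def gate_value(hidden: list[float], memory: list[float]) -> float:
    """Score how well a retrieved memory vector matches the current context.

    Assumption: the score is a scaled dot product passed through a sigmoid.
    """
    if len(hidden) != len(memory):
        raise ValueError("hidden and memory must have the same length")
    score = sum(h * m for h, m in zip(hidden, memory)) / math.sqrt(len(hidden))
    return sigmoid(score)


def gated_merge(hidden: list[float], memory: list[float]) -> list[float]:
    """Add the memory vector to the hidden state, scaled by the gate."""
    gate = gate_value(hidden, memory)
    return [h + gate * m for h, m in zip(hidden, memory)]


# A memory vector that points the same way as the context gets a larger gate
# than one that points the opposite way.
aligned = gate_value([1.0, 0.0], [1.0, 0.0])
opposed = gate_value([1.0, 0.0], [-1.0, 0.0])
```

In this sketch, a retrieved embedding that disagrees with the context is scaled down rather than discarded, which is one simple way a model could suppress an irrelevant lookup.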

How Engram Works

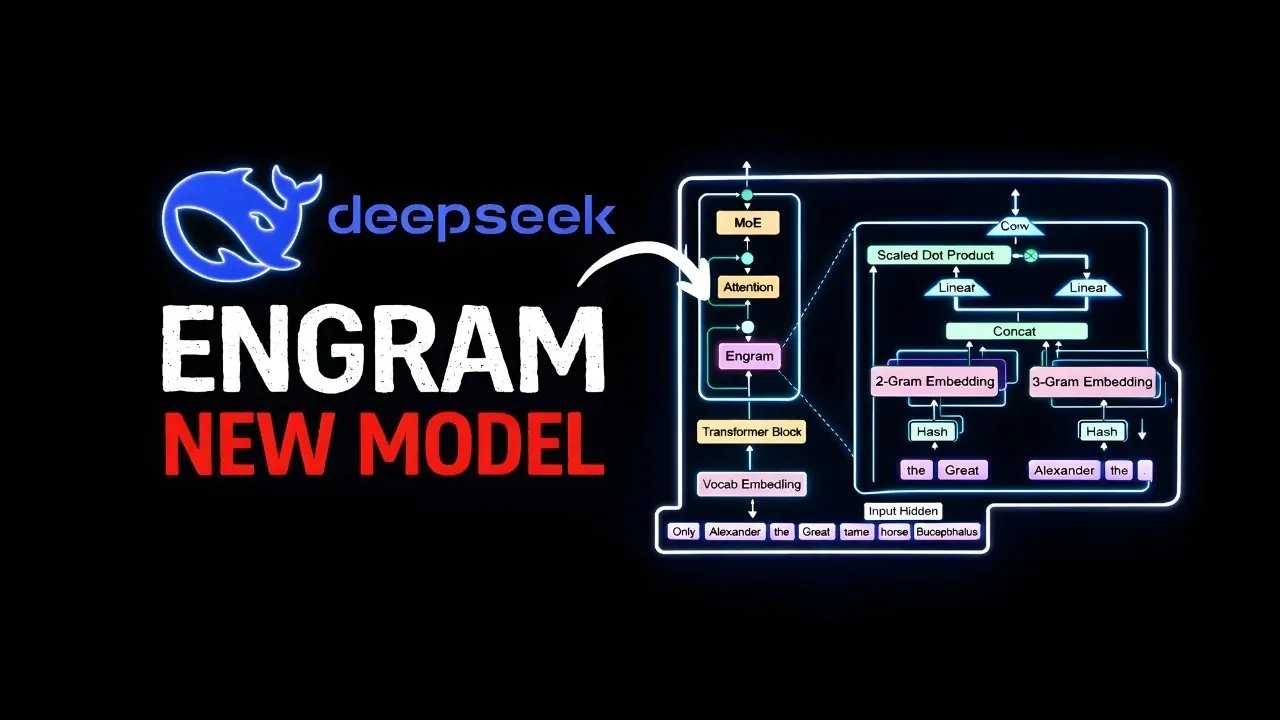

Engram’s architecture uses token combinations, or n-grams, extracted from input text to optimize task processing. These n-grams are hashed to retrieve embeddings from a pre-trained lookup table stored in system RAM. The process unfolds as follows:

- N-grams are generated from the input text, capturing key patterns and sequences.

- The n-grams are hashed to locate relevant embeddings in a pre-trained lookup table.

- A gating mechanism evaluates the relevance of the retrieved embeddings based on the task’s specific context.

This design offloads static memory tasks to hash-based lookups, freeing up transformer layers for tasks requiring deeper reasoning. By reducing the computational burden on transformers, Engram not only improves efficiency but also minimizes latency during inference, allowing faster and more responsive performance.
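The first two steps above (n-gram extraction and hashed lookup) can be sketched in a few lines of Python. Everything concrete here is an assumption for illustration: the table size, the embedding width, the BLAKE2 hash, the random table contents, and the function names `ngrams`, `slot`, and `lookup`. In a real model the table would be learned during training, and the gating step would follow the lookup.

```python
import hashlib
import random

TABLE_SIZE = 1024  # hypothetical number of rows in the lookup table
EMBED_DIM = 4      # hypothetical embedding width

# Stand-in for a pre-trained table: random rows with a fixed seed.
_rng = random.Random(0)
TABLE = [[_rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)]
         for _ in range(TABLE_SIZE)]


def ngrams(tokens: list[str], n: int) -> list[tuple[str, ...]]:
    """Return every run of n consecutive tokens, in order."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def slot(ngram: tuple[str, ...], table_size: int = TABLE_SIZE) -> int:
    """Map an n-gram to a table row with a deterministic hash."""
    key = "\x1f".join(ngram).encode("utf-8")  # unit separator between tokens
    digest = hashlib.blake2b(key, digest_size=8).digest()
    return int.from_bytes(digest, "big") % table_size


def lookup(tokens: list[str], n: int = 2) -> list[tuple[tuple[str, ...], list[float]]]:
    """Pair each n-gram with the embedding stored at its hashed row."""
    return [(gram, TABLE[slot(gram)]) for gram in ngrams(tokens, n)]


pairs = lookup(["the", "capital", "of", "france"], n=2)
```

The lookup costs one hash and one row read per n-gram, regardless of how many transformer layers the model has, which is the source of the claimed savings for recall-style inputs.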

Performance Gains

Engram delivers significant performance improvements across a variety of benchmarks, demonstrating its effectiveness in optimizing LLM computation. Key areas of improvement include:

- Knowledge Recall: Scores on benchmarks such as MMLU and ARC AGI improve while computational overhead falls, showcasing Engram’s ability to handle static memory tasks efficiently.

- Reasoning Tasks: Complex problems, including mathematical reasoning and logical inference, see improved performance due to the freed-up transformer capacity.

By reallocating resources to focus on complex reasoning, Engram enables deeper and more accurate processing without increasing the number of transformer layers. This results in faster task completion and improved scalability, making it a practical solution for large-scale applications.

Hardware Efficiency

Engram’s reliance on hash-based lookups introduces several hardware optimizations that make it more accessible and cost-effective to deploy. These include:

- Reduced GPU Load: Lookup tables are stored in system RAM, alleviating the burden on expensive GPU memory and reducing overall hardware costs.

- Minimized Bottlenecks: Embeddings are pre-fetched during computation, so lookups do not stall processing.

These hardware efficiencies lower the barriers to deploying large-scale LLMs, allowing organizations to use advanced AI capabilities without incurring prohibitive costs. This makes Engram particularly appealing for industries that require scalable and efficient AI solutions.
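Pre-fetching is possible because the table rows a lookup needs depend only on the input tokens, so they can be requested before the layers that consume them have run. The sketch below shows that ordering with a background thread. It is a toy under stated assumptions: `HOST_TABLE`, `fetch_rows`, `early_layers`, and `forward` are hypothetical names, the "layers" are a trivial stand-in, and a real system would overlap host-to-GPU transfers with GPU compute rather than rely on Python threads.

```python
from concurrent.futures import ThreadPoolExecutor

# Stand-in for a lookup table held in system RAM (row index -> embedding).
HOST_TABLE = {i: [float(i), float(i) * 2.0] for i in range(8)}


def fetch_rows(indices: list[int]) -> list[list[float]]:
    """Read the requested rows from the host-side table."""
    return [HOST_TABLE[i] for i in indices]


def early_layers(x: list[float]) -> list[float]:
    """Placeholder for the computation that runs before the memory is needed."""
    return [v * 2.0 for v in x]


def forward(x: list[float], indices: list[int]) -> list[float]:
    """Start the fetch first, compute, then combine once both are ready."""
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(fetch_rows, indices)  # prefetch starts here
        hidden = early_layers(x)                    # runs while the fetch is in flight
        rows = pending.result()                     # waits only if the fetch is not done
    memory = [sum(column) for column in zip(*rows)]  # sum the fetched rows
    return [h + m for h, m in zip(hidden, memory)]


out = forward([1.0, 1.0], [1, 2])
```

The point of the ordering is that the wait at `pending.result()` is short or zero whenever the fetch takes less time than the computation it overlaps with.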

Limitations to Consider

While Engram offers numerous advantages, it is not without its challenges. Some of the key limitations include:

- Hash Collisions: The use of hash-based lookups can introduce inaccuracies, particularly in tasks requiring high precision or unique data retrieval.

- Static Lookup Tables: The reliance on pre-trained lookup tables limits the model’s ability to adapt dynamically during inference, reducing flexibility in certain scenarios.

- N-Gram Constraints: The dependence on n-grams may hinder the model’s ability to capture complex patterns or relationships in input data.

- Specialized Domains: Engram’s performance in niche or highly specialized areas remains untested, leaving room for further exploration and refinement.

These limitations highlight areas where additional research and development could enhance Engram’s capabilities and broaden the range of use cases it can serve.
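The hash-collision concern is easy to reproduce on a small scale. The sketch below hashes 100 distinct keys into a 64-row table, so by the pigeonhole principle at least two keys must share a row and would therefore retrieve the same embedding. The key names, the table size, the BLAKE2 hash, and the helper names `slot` and `collisions` are all illustrative assumptions, not details of Engram itself.

```python
import hashlib
from collections import defaultdict


def slot(key: str, table_size: int) -> int:
    """Map a key to a table row with a deterministic hash."""
    digest = hashlib.blake2b(key.encode("utf-8"), digest_size=8).digest()
    return int.from_bytes(digest, "big") % table_size


def collisions(keys: list[str], table_size: int) -> dict[int, list[str]]:
    """Return only the rows that more than one key maps to."""
    buckets: dict[int, list[str]] = defaultdict(list)
    for key in keys:
        buckets[slot(key, table_size)].append(key)
    return {row: ks for row, ks in buckets.items() if len(ks) > 1}


keys = [f"ngram-{i}" for i in range(100)]
shared = collisions(keys, table_size=64)
print(f"{len(shared)} of 64 rows are shared by two or more keys")
```

Growing the table lowers the collision rate at the cost of more memory, which is the trade-off behind this limitation.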

Broader Implications

Engram’s design draws inspiration from human cognition, mirroring the separation between fast, automatic recall (System 1) and deliberate, effortful reasoning (System 2). This approach represents a significant evolution in LLM architecture, akin to the introduction of attention mechanisms in earlier models. By aligning computational methods with task requirements, Engram reduces costs and improves scalability, making advanced AI more accessible to a wider audience. Its influence could extend beyond LLMs, shaping future innovations in AI by emphasizing efficiency, task-specific processing, and resource optimization.

Media Credit: Prompt Engineering
