What if the fragmented world of open AI models could finally speak the same language? Sam Witteveen explores the newly introduced “Open Responses,” an open inference standard initiated by OpenAI and built by the open source AI community, with backing from the Hugging Face ecosystem. Open Responses is based on the Responses API and is designed for the future of agents. For years, the lack of standardization has been a thorn in the side of AI innovation, forcing developers to wrestle with inconsistent APIs and compatibility headaches. With features like multimodal inputs and reasoning token support, Open Responses promises to unify this chaotic ecosystem and make it easier to build, scale, and innovate with AI.

In this overview, we’ll break down what makes Open Responses a compelling option and why it’s already gaining traction among major players like Hugging Face and Vercel. You’ll discover how its streamlined design reduces complexity, fosters interoperability, and lets developers focus on creating impactful solutions rather than navigating technical barriers. Whether you’re curious about its advanced features, its implications for the future of AI, or how it stacks up against other APIs, this guide will unpack it all. The question isn’t whether Open Responses will change the game but how soon you’ll start seeing its impact.

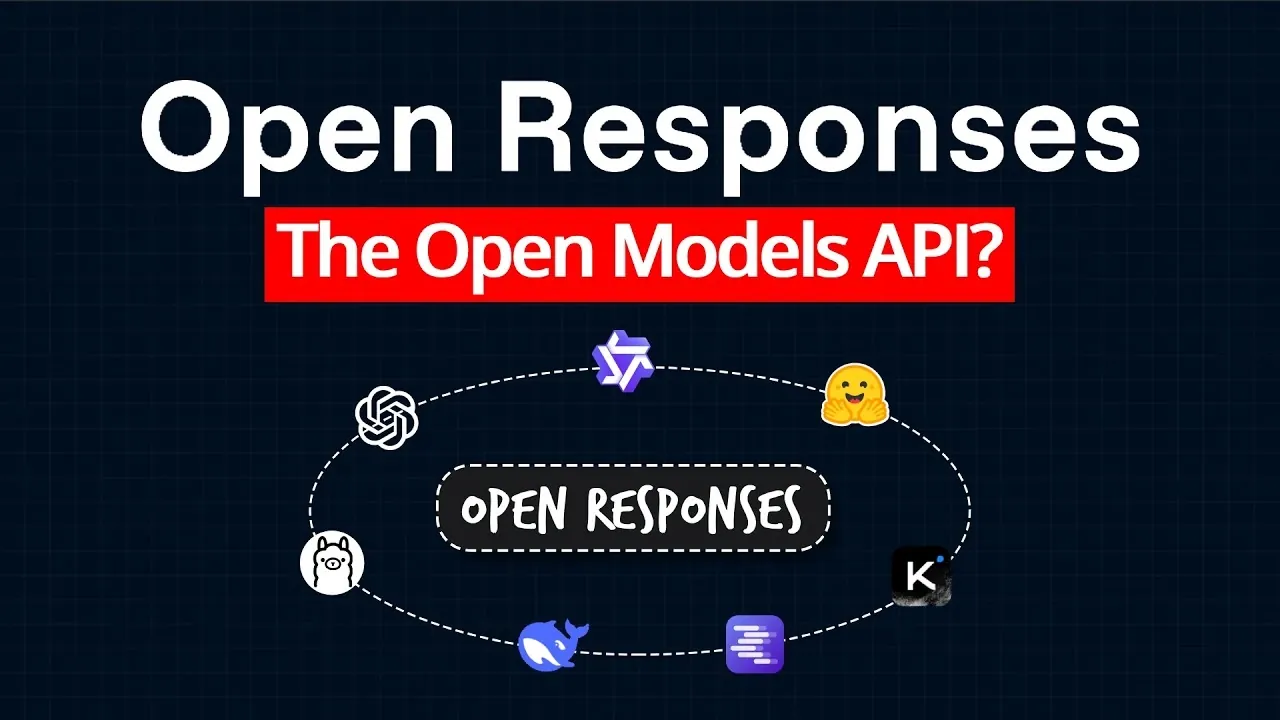

Open Responses API Overview

TL;DR Key Takeaways:

- The Open Responses API introduces a unified framework for interacting with open models, addressing challenges like compatibility, usability, and scalability.

- Key features include multimodal input support, reasoning token handling, real-time streaming, and extensibility, simplifying workflows and enhancing efficiency for developers.

- It fosters interoperability between proprietary and open models, encouraging collaboration and innovation across the AI ecosystem.

- Early adoption by major players like Hugging Face, Vercel, and LM Studio highlights its potential to standardize and unify the fragmented open model landscape.

- Open Responses is designed to adapt to future needs, positioning itself as a forward-thinking solution for developers and a cornerstone for the next generation of AI applications.

The Purpose and Vision Behind Open Responses

The Open Responses API was developed to establish a universal standard for interacting with open models, offering a streamlined and consistent approach for developers. Its primary objective is to reduce the complexity associated with managing multiple APIs, each with unique requirements. By providing a standardized framework, Open Responses enables developers to:

- Integrate tools and features seamlessly: Support for multimodal inputs, agentic loops, and other advanced functionalities.

- Focus on innovation: Developers can concentrate on building AI-driven solutions without the need to adapt to varying APIs.

- Enhance efficiency: A consistent interface accelerates development workflows and reduces overhead.

This initiative is particularly relevant in modern AI applications, where interoperability and extensibility are critical for success. By addressing these needs, Open Responses lays the groundwork for a more unified and collaborative AI ecosystem.
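As a rough illustration of what a single, consistent request format with multimodal input could look like, here is a minimal Python sketch. It assumes Open Responses keeps the request shape of the OpenAI Responses API it is based on (a `model` field plus an `input` list of role-tagged items with `input_text` and `input_image` parts). The helper name `build_request`, the model name, and the image URL are hypothetical and chosen only for the example.

```python
import json


def build_request(model, text, image_url=None):
    """Build a Responses-style request body as a plain dict.

    Assumption: the body follows the OpenAI Responses API shape
    (a "model" plus an "input" list of role-tagged items).
    """
    # Every request carries at least one text part.
    content = [{"type": "input_text", "text": text}]
    # An image part is appended only when an image URL is supplied.
    if image_url is not None:
        content.append({"type": "input_image", "image_url": image_url})
    return {"model": model, "input": [{"role": "user", "content": content}]}


# Hypothetical model name and image URL, used only for illustration.
body = build_request(
    "example-open-model",
    "Describe this chart.",
    image_url="https://example.com/chart.png",
)
print(json.dumps(body, indent=2))
```

Because the body is an ordinary dictionary, the same helper could in principle be reused for any provider that accepts this shape, which is the kind of reuse a shared standard is meant to enable.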

Overcoming Challenges in the Open Model Ecosystem

The open model ecosystem has long been hindered by a lack of standardization, creating barriers for developers who need to integrate multiple models. Each model often requires unique handling of features such as reasoning tokens, summaries, and tool integration. Open Responses addresses these challenges by introducing:

- Standardized Interactions: A unified API design that simplifies the integration process.

- Interoperability: Compatibility between proprietary and open models, fostering collaboration across platforms.

- Scalability: A flexible framework that evolves with the needs of developers without disrupting existing implementations.

By resolving these issues, Open Responses not only reduces the technical complexity of working with open models but also encourages innovation by allowing developers to focus on creating impactful solutions.
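To make the idea of standardized handling more concrete, the sketch below separates reasoning summaries, tool calls, and final text from one response object. It assumes the response carries an `output` list of typed items (`reasoning`, `function_call`, `message`), as in the OpenAI Responses API that Open Responses builds on. The `split_output` helper and the sample response are hand-written for illustration and are not output from a real model or provider.

```python
def split_output(response):
    """Separate a Responses-style result into reasoning, text, and tool calls.

    Assumption: "output" is a list of typed items, as in the OpenAI
    Responses API. Unknown item types are ignored.
    """
    reasoning, text_parts, tool_calls = [], [], []
    for item in response.get("output", []):
        kind = item.get("type")
        if kind == "reasoning":
            # Reasoning items carry a list of summary parts.
            for part in item.get("summary", []):
                if part.get("type") == "summary_text":
                    reasoning.append(part["text"])
        elif kind == "message":
            # Assistant messages carry a list of output_text parts.
            for part in item.get("content", []):
                if part.get("type") == "output_text":
                    text_parts.append(part["text"])
        elif kind == "function_call":
            # Arguments arrive as a JSON-encoded string.
            tool_calls.append((item["name"], item["arguments"]))
    return {
        "reasoning": reasoning,
        "text": "".join(text_parts),
        "tool_calls": tool_calls,
    }


# Hand-written sample; not real model output.
sample_response = {
    "output": [
        {"type": "reasoning",
         "summary": [{"type": "summary_text", "text": "Compared the two options."}]},
        {"type": "function_call", "name": "get_weather",
         "arguments": "{\"city\": \"Paris\"}", "call_id": "call_1"},
        {"type": "message", "role": "assistant",
         "content": [{"type": "output_text", "text": "It is sunny."}]},
    ]
}

result = split_output(sample_response)
print(result["reasoning"], result["tool_calls"], result["text"])
```

With one agreed item format, a parser like this would not need per-model branches for reasoning or tool output.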


Adoption and Industry Collaboration

The Open Responses API has already garnered significant support from key players in the AI industry. Early adopters include Hugging Face, Vercel, OpenRouter, LM Studio, Ollama, and vLLM. This adoption underscores the API’s potential to unify the fragmented landscape of open models. By fostering collaboration between developers and model providers, Open Responses is paving the way for a more cohesive and efficient ecosystem.

Key Features That Set Open Responses Apart

The Open Responses API introduces a range of features designed to enhance its versatility and usability for developers. These include:

- Streaming: Real-time data processing for dynamic and responsive interactions.

- Tool Choice: Easy integration of tools tailored to specific use cases, improving flexibility.

- Reasoning Token Handling: Simplified management of reasoning processes across different models.

- Summaries: Built-in support for generating concise and accurate summaries, saving time and effort.

- Extensibility: A forward-compatible design that adapts to future needs without disrupting current implementations.

These features make Open Responses a valuable tool for a wide range of applications, from research and experimentation to large-scale production deployments.
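The features listed above can be sketched in Python as one request body plus a small stream reader. This example assumes the field names of the OpenAI Responses API (`stream`, `tools`, `tool_choice`, `reasoning`) and its `response.output_text.delta` streaming event carry over to Open Responses. The `get_weather` tool, both helper functions, and the sample stream lines are hypothetical and hand-written; no network call is made.

```python
import json


def build_feature_request(model, prompt):
    """Request body that turns on streaming, a tool, and reasoning summaries."""
    return {
        "model": model,
        "input": prompt,
        "stream": True,  # ask for incremental server-sent events
        "tools": [{
            "type": "function",
            "name": "get_weather",  # hypothetical tool for illustration
            "description": "Look up the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        }],
        "tool_choice": "auto",  # let the model decide whether to call the tool
        "reasoning": {"effort": "low", "summary": "auto"},
    }


def collect_text(sse_lines):
    """Join the text deltas found in a sequence of server-sent event lines."""
    parts = []
    for line in sse_lines:
        if not line.startswith("data:"):
            continue  # skip "event:" lines and blank separators
        payload = line[len("data:"):].strip()
        try:
            event = json.loads(payload)
        except ValueError:
            continue  # skip non-JSON markers such as a terminal "[DONE]"
        if isinstance(event, dict) and event.get("type") == "response.output_text.delta":
            parts.append(event.get("delta", ""))
    return "".join(parts)


# Hand-written sample stream; not captured from a real server.
sample_stream = [
    "event: response.output_text.delta",
    'data: {"type": "response.output_text.delta", "delta": "Hello"}',
    "",
    "event: response.output_text.delta",
    'data: {"type": "response.output_text.delta", "delta": ", world"}',
    "",
    "data: [DONE]",
]

request_body = build_feature_request("example-open-model", "Say hello.")
streamed_text = collect_text(sample_stream)
print(streamed_text)
```

The stream reader deliberately ignores event types it does not recognize, which fits the forward-compatible design described above: new event types can appear without breaking older clients.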

Comparison with Other APIs

Open Responses distinguishes itself from other APIs, such as Anthropic’s API for Claude, by focusing on unifying the fragmented approaches within the open model ecosystem. While such established APIs offer robust capabilities, Open Responses emphasizes interoperability and extensibility, making it a forward-thinking solution for developers and model providers alike. Its ability to bridge the gap between proprietary and open models positions it as a key player in the evolving AI landscape.

Implementation and Testing Across Platforms

The Open Responses API has been tested for compatibility with a variety of open models, including Llama and gpt-oss. It supports both local and cloud-based inference setups, offering flexibility for developers working in diverse environments. Whether deploying self-hosted models or using cloud infrastructure, Open Responses provides a consistent interface, making it an adaptable solution for different use cases.
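One way to picture the local and cloud flexibility is a client in which only the base URL and credentials change. The sketch below prepares, but does not send, the same request for two endpoints using Python's standard library. The `/responses` path is an assumption borrowed from the OpenAI Responses API, and both base URLs, the model name, the API key, and the `make_request` helper are placeholders rather than real services or official client code.

```python
import json
import urllib.request


def make_request(base_url, body, api_key=None):
    """Prepare (but do not send) a POST to a Responses-style endpoint."""
    headers = {"Content-Type": "application/json"}
    # Local servers often need no key; hosted ones usually expect a bearer token.
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/responses",  # assumed endpoint path
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


body = {"model": "example-open-model", "input": "Hello"}

# Placeholder endpoints: a self-hosted server and a hosted provider.
local_req = make_request("http://localhost:8000/v1", body)
cloud_req = make_request("https://api.example.com/v1/", body, api_key="YOUR_API_KEY")

print(local_req.full_url)
print(cloud_req.full_url)
```

Passing either object to `urllib.request.urlopen` would send it. The point of the sketch is that the request body is byte-for-byte identical in both cases.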

Implications for the Future of AI

The introduction of Open Responses has far-reaching implications for the AI industry. As adoption increases, it is expected to influence how model providers operate, potentially transforming them into system providers offering comprehensive, end-to-end solutions. Its compatibility with tools like Claude Code and the Anthropic API further highlights its potential to become a cornerstone of broader AI ecosystems. By simplifying workflows and fostering innovation, Open Responses is poised to play a pivotal role in shaping the next generation of open models.

What Developers Can Look Forward To

For developers, Open Responses offers a streamlined and intuitive experience. Its design builds on familiar concepts from existing OpenAI APIs, ensuring accessibility for those with prior experience. The API’s support for tools like Ollama and Hugging Face enhances its appeal, providing a robust foundation for experimentation, deployment, and scaling. Developers can expect a more efficient workflow, allowing them to focus on creating impactful AI solutions.

Driving the Future of Open Models

The Open Responses API represents a significant step forward in standardizing interactions with open models. By addressing critical challenges, fostering collaboration, and enabling advanced features, it has the potential to unify the ecosystem and drive innovation. As adoption continues to grow, Open Responses is well-positioned to become the new standard for open model APIs, simplifying development processes and unlocking new possibilities for AI applications.

Media Credit: Sam Witteveen
