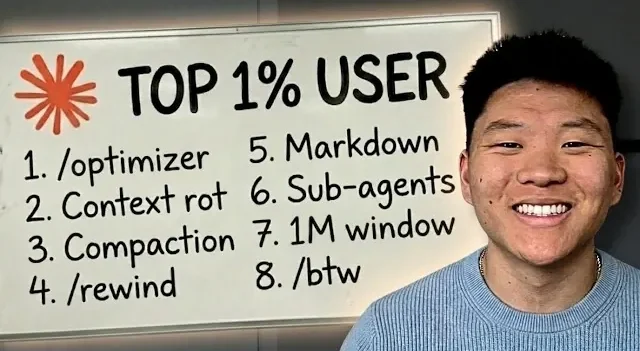

Managing token usage is crucial for avoiding session limits when working with Claude, as explained by Nate Herk. One key detail he highlights is how Claude processes conversation history, rereading the entire context with each interaction. This can lead to excessive token consumption, especially in longer sessions. By applying strategies like manual compaction, where you summarize and reset the context once it reaches about 60% of the token window, you can maintain clarity while preventing inefficiencies.

Explore practical methods to optimize your sessions and reduce token usage. Learn how to use session chaining to divide large projects into smaller, manageable tasks and how sub-agents with fresh context windows can handle specific assignments effectively. Gain insight into actionable techniques like converting files into markdown to conserve tokens and using commands such as /re and /btw to streamline context management.

TL;DR Key Takeaways:

- Tokens are the fundamental units of text processed by Claude, and managing them effectively is crucial to avoid inefficiencies and session limits.

- Long sessions can lead to “context rot” (reduced accuracy over time) and “auto compaction” (loss of critical details when nearing token limits).

- Best practices for token management include manual compaction, session chaining and using sub-agents to optimize workflows and reduce token consumption.

- Practical tips to minimize token usage include monitoring session limits, converting files to markdown, using concise prompts and using tools like /re and /btw.

- Adopting a disciplined approach, such as focusing on smaller context windows and avoiding overloading the token window, ensures better performance, reduced costs and higher-quality outputs.

What Are Tokens and Context?

Tokens are the basic units of text that Claude processes. A token is typically a word, a fragment of a word, a number or a punctuation mark rather than a single character. Each interaction with Claude involves rereading the entire conversation history, so the tokens consumed per turn grow as the session progresses. Context, on the other hand, refers to all the information visible to Claude during a session, including prompts, conversations and any integrated tools or datasets.

The challenge lies in balancing the need for detailed context with the constraints of token limits. As sessions grow longer, token consumption rises, increasing the likelihood of hitting session limits. Poor context management can lead to inefficiencies, errors and diminished performance. By understanding these dynamics, you can take proactive steps to optimize your workflows.
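To see why rereading the history matters, the following minimal sketch adds up the input tokens processed over a session. It assumes a deliberately simplified model that is not taken from the video: every turn adds the same number of tokens (`tokens_per_turn`, a hypothetical figure) and the full history is read again on each turn. Real sessions vary per turn, and features such as caching can change the actual cost.

```python
def cumulative_input_tokens(tokens_per_turn: int, turns: int) -> int:
    """Total input tokens read across a session in which every turn
    rereads the whole conversation history (simplified model)."""
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn  # the new exchange joins the history
        total += history            # the whole history is read again
    return total


# Assumed figure: 500 tokens added per turn.
for turns in (10, 50, 100):
    print(turns, cumulative_input_tokens(500, turns))
```

Under these assumptions, the total grows roughly with the square of the number of turns: ten times as many turns costs about a hundred times as many input tokens, which is why long sessions reach limits much sooner than their length suggests.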

Why Long Sessions Can Be Problematic

Extended sessions present two primary challenges that can hinder productivity:

- Context Rot: Over time, Claude’s ability to retrieve and process relevant information diminishes. This phenomenon, known as context rot, can result in reduced accuracy and slower response times, ultimately affecting the quality of outputs.

- Auto Compaction: When token usage approaches 95% of the limit, Claude automatically summarizes the context to prevent exceeding the limit. While this feature is helpful, it often leads to the loss of critical details, which can degrade the overall effectiveness of the session.

To address these challenges, it is essential to adopt strategies that prioritize efficient token and context management.

Best Practices for Managing Tokens

Implementing intentional strategies can help you optimize token usage and maintain session efficiency. Consider the following approaches:

- Manual Compaction: Regularly summarize and reset the context when it reaches approximately 60% of the token window. This practice ensures that essential details are preserved while maintaining efficiency and clarity.

- Session Chaining: Break large projects into smaller, focused sessions. For example, separate sessions can be used for discovery, planning and execution phases. This approach reduces token consumption and enhances clarity by keeping each session targeted and manageable.

- Sub-Agents: Assign specific tasks to sub-agents with fresh context windows. This method is particularly effective for routine or isolated tasks, especially when using less expensive models, as it minimizes token usage while maintaining productivity.
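The manual compaction rule can be expressed as a simple threshold check. This is a minimal sketch, not a feature of Claude itself: the function name `context_advice` and the 200,000-token window in the usage lines are assumptions for illustration, while the 60% and 95% thresholds come from the figures quoted in this article.

```python
MANUAL_COMPACT_AT = 0.60  # article's suggested point for manual compaction
AUTO_COMPACT_AT = 0.95    # point at which the article says auto compaction occurs


def context_advice(used_tokens: int, window_tokens: int) -> str:
    """Return a suggestion based on how full the context window is."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    fraction = used_tokens / window_tokens
    if fraction >= AUTO_COMPACT_AT:
        return "auto compaction is imminent: summarize and reset now"
    if fraction >= MANUAL_COMPACT_AT:
        return "summarize and reset the context"
    return "ok"


# Assumed window size of 200,000 tokens, for illustration only.
print(context_advice(90_000, 200_000))
print(context_advice(130_000, 200_000))
print(context_advice(195_000, 200_000))
```

Passing the window size as a parameter keeps the check usable with whatever limit applies to your plan or model.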

Practical Tips to Reduce Token Usage

In addition to the best practices outlined above, the following tips can help streamline workflows and minimize token consumption:

- Monitor session limits frequently and adjust your workflows as necessary to avoid nearing the token cap.

- Convert files such as PDFs or HTML into markdown format to significantly reduce token usage during processing.

- Use concise prompts and avoid including unnecessary context to keep interactions efficient and focused.

- Use tools like /re to rewind and clean up context or /btw to handle side questions without cluttering the session.
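As an illustration of the file conversion tip, here is a rough sketch that strips an HTML page down to markdown-style text using only the Python standard library. The class and function names are hypothetical, only a handful of tags are handled (numbered lists are rendered as plain bullets), and character count is used as a crude stand-in for token count. A dedicated conversion tool would handle real documents far better.

```python
import re
from html.parser import HTMLParser


class MarkdownExtractor(HTMLParser):
    """Keeps headings, paragraphs and list items; drops scripts and styles."""

    SKIP = {"script", "style"}
    HEADINGS = {"h1": "# ", "h2": "## ", "h3": "### "}

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self.skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1
        elif tag in self.HEADINGS:
            self.parts.append("\n\n" + self.HEADINGS[tag])
        elif tag == "li":
            self.parts.append("\n- ")
        elif tag in ("p", "ul", "ol"):
            self.parts.append("\n\n")

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            # Collapse runs of whitespace left over from HTML indentation.
            self.parts.append(re.sub(r"\s+", " ", data))


def html_to_markdown(html: str) -> str:
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    lines = [line.strip() for line in "".join(parser.parts).split("\n")]
    return re.sub(r"\n{3,}", "\n\n", "\n".join(lines)).strip()


sample = """<html><head><style>p { color: red; }</style></head>
<body><div class="wrapper"><h1>Report</h1>
<p class="intro">Quarterly <b>results</b> improved.</p>
<ul><li>Revenue up</li><li>Costs down</li></ul></div></body></html>"""

markdown = html_to_markdown(sample)
print(markdown)
print(len(sample), "characters of HTML ->", len(markdown), "characters of markdown")
```

The saving comes from dropping tags, attributes, styles and scripts that carry no meaning for the task but would still be read as tokens.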

Tools and Frameworks for Token Management

Several tools and frameworks are available to help you manage tokens and context more effectively. These resources can enhance your workflows and improve overall efficiency:

- Custom dashboards that track token usage in real time, allowing you to identify inefficiencies and make adjustments as needed.

- GitHub repositories offering token optimization frameworks, such as Rust Token Killer and Context Mode, which are designed to streamline workflows and reduce token consumption.

- Scripts specifically developed for session handoffs and context management, allowing smoother transitions between tasks and reducing the risk of errors.
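A session handoff script can be as simple as writing a short markdown note that the next session reads first. The sketch below is an assumption about what such a script might look like, not a description of any tool named above; the `Handoff` class and its fields are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Hypothetical container for what the next session needs to know."""

    goal: str
    decisions: list[str] = field(default_factory=list)
    open_tasks: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        def bullets(items: list[str]) -> list[str]:
            return [f"- {item}" for item in items] or ["- (none)"]

        lines = [f"# Handoff: {self.goal}", "", "## Decisions so far"]
        lines += bullets(self.decisions)
        lines += ["", "## Open tasks"]
        lines += bullets(self.open_tasks)
        return "\n".join(lines) + "\n"


note = Handoff(
    goal="Migrate billing service",
    decisions=["Keep the existing database schema"],
    open_tasks=["Write migration script", "Update integration tests"],
)
print(note.to_markdown())
```

Starting the next session from a note like this carries over the decisions that matter without carrying over the full conversation that produced them.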

Key Insights on Token Usage

Understanding the impact of token usage on performance is crucial for effective management. Consider these key insights:

- Longer sessions often lead to reduced depth of thinking and an increased likelihood of errors, as Claude’s ability to process information diminishes over time.

- Retrieval accuracy declines significantly as token usage approaches the maximum limit, making it essential to manage context proactively.

- Effective context management not only improves performance but also reduces costs, ensuring high-quality outputs without unnecessary expense.

Adopting a Disciplined Approach

A disciplined approach to token and context management can make a significant difference in your workflows. By developing intentional habits, you can optimize performance and avoid hitting session limits:

- Avoid treating the 1 million token window as a target. Instead, use it as a buffer to ensure smooth operations and prevent overloading the system.

- Focus on the first 20% of the session, where performance is typically at its peak. This allows you to maximize efficiency and accuracy early on.

- Start with smaller context windows to develop disciplined habits before scaling up to more complex workflows. This approach helps build a strong foundation for effective token management.

By implementing these strategies and maintaining a disciplined approach, you can optimize token usage, improve efficiency and ensure high-quality outputs while avoiding session limits. Whether through manual compaction, session chaining, or using tools and frameworks, effective management of tokens and context is essential for unlocking the full potential of Claude.

Media Credit: Nate Herk | AI Automation
